Do researchers still need to take notes in interviews?

AI can’t (yet) capture all the data you need.

These days, every video-calling tool transcribes your interviews. Some do it badly (Teams), some do it well (pretty much all the others), but transcription is basically a given.

So why would anyone still take notes in user research?

There isn’t really an established convention here. Some researchers use a dedicated notetaker so they can focus on moderating. Others take their own notes, but how they do it comes down to personal preference. Most people default to whatever they did last time without thinking about it much.

Let’s break this topic down into three questions:

- Given AI transcription, does anyone need to take notes at all?

- If yes, should it be the moderator or someone else?

- If the moderator, how should they do it?

Does anyone need to take notes at all?

Automatic transcription is a massive timesaver, but transcripts only capture what was said. Depending on the type of research you’re running, that covers a larger or smaller share of what was actually communicated.

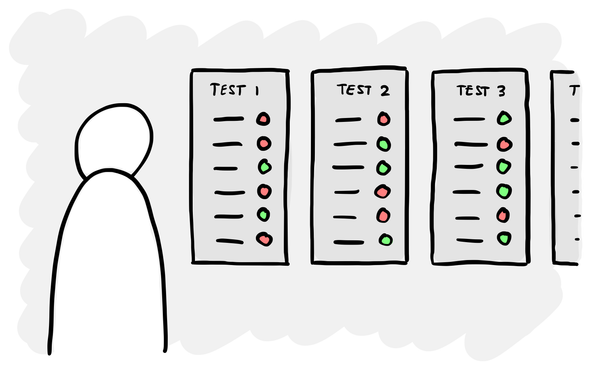

In formative research where there’s no prototype and nothing shown on a screen, a good transcript covers most of what matters. It doesn’t capture how something was said, body language and so on, but you might get 80% of the meaning with a literal recording of the words that were spoken.

In evaluative research, transcripts only represent a fraction of the data – you might as well not bother analysing them in some cases. What someone did with the prototype, where they hesitated, which buttons they hovered over, the small sigh before they gave up: none of this is in the transcript. If your only record is what was said, your analysis will be flawed.

Across all types of research, someone has to capture the data that AI can’t (yet) record on its own.

Another good reason for both moderators and observers to take notes – even if the session is being perfectly recorded by AI – is that writing down the key points keeps you more engaged and helps you remember what happened. This is true for any communication, not just user research.

Should the moderator take notes?

Some researchers delegate note-taking, getting someone else to take notes so they can focus on the conversation. There’s a case for this, especially if you’re new to moderating and find the two things hard to combine. But I still think moderators should take notes.

You have the best vantage point, especially in person. You can see the screen from where they see it. You notice the subtle body language, the hover before the click. You’re closest to the participant and most present in the conversation. A notetaker on a gallery view or behind a one-way mirror is getting a flatter version of the session, and their notes will reflect that.

This is even more important if you are going to use AI to help you analyse the findings. Anything that is not in the transcript – body language, hesitations, pass/fail, the click that didn’t happen – needs to be captured as structured data and the moderator is in the best position to do that.

The ‘it splits your attention’ objection is valid, but it’s an argument about training, not whether the thing is possible. With practice you learn to write without looking, develop shorthand and pace the interview to leave space for writing things down. It’s a craft skill: hard at first, then automatic.

How should they do it?

There is a whole range of ways that moderators can take notes:

- Free-form on a blank page or in a file. The simplest option. Good for very unstructured exploratory work when you don’t know what’s coming.

- In a spreadsheet. One column per participant, one row per topic. Structured and easy to process later.

- Annotating the discussion guide. Pen on printed paper (old school!) or as a PDF on an iPad. Notes and questions live in the same place.

- In a Notion database. Templates can make this easier than a spreadsheet. Useful when you want structured data points like scores or pass/fail.

- On a Miro/FigJam board. Sticky notes per topic or per participant. Good for analysis, but trickier to do live.

Each has its pros and cons. Most researchers only ever reach for one or two, and tend to default to the same method on every project regardless of what it actually needs.

Structure your notes to structure your data

Whichever method you pick, the more structure you build in, the easier the analysis will be. If you have to sit down with 12 sets of completely free-form notes and try to compare what people said about one topic, it’s going to take a long time. The points are in there, but you’re hunting across pages of unrelated stuff. When you structure your notes, they’re much faster to work with.

A rule of thumb: is there something about each session you’d want to analyse later that won’t be in the transcript? Body language, hesitations, a pass or fail, a score out of ten, the fact that someone clicked the wrong thing three times before noticing. If the answer is yes, you need a way to capture it.

What this looks like depends on the method. If you’re annotating a discussion guide, don’t just leave blank space to write in: add boxes for the specific things you want to capture at each step. If you’re using a spreadsheet, add more rows for specific data points. If you’re using a Notion database, define the properties you’ll fill in as you go, so the fields do the remembering for you. Then when you come to do analysis, it’ll be much easier for both you and your AI assistant.
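To make "define the properties you’ll fill in as you go" concrete, here is a minimal Python sketch of what one row of structured notes might look like. The field names (`passed`, `score`, `wrong_clicks`, `hesitated`) are assumptions based on the examples above, not a standard schema, so swap in whatever your study actually needs.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields


@dataclass
class TaskObservation:
    """One row of structured notes: things the transcript can't show.

    All field names are illustrative assumptions, not a fixed schema.
    """
    participant: str
    task: str
    passed: bool       # pass/fail for the task
    score: int         # e.g. a rating out of ten
    wrong_clicks: int  # clicks on the wrong thing before noticing
    hesitated: bool    # visible hesitation before acting
    note: str = ""     # short free-text observation


def to_csv(observations):
    """Serialise observations to CSV text, one row per observation."""
    buffer = io.StringIO()
    columns = [f.name for f in fields(TaskObservation)]
    writer = csv.DictWriter(buffer, fieldnames=columns)
    writer.writeheader()
    for obs in observations:
        writer.writerow(asdict(obs))
    return buffer.getvalue()


def pass_rate(observations, task):
    """Share of sessions in which the given task was passed (0.0 to 1.0)."""
    relevant = [o for o in observations if o.task == task]
    if not relevant:
        return 0.0
    return sum(o.passed for o in relevant) / len(relevant)


# Made-up example rows, purely for illustration.
observations = [
    TaskObservation("P1", "checkout", True, 8, 0, False),
    TaskObservation("P2", "checkout", False, 3, 3, True, "sighed, then gave up"),
]
```

Because every session fills in the same fields, a question like "how many people completed checkout?" becomes a one-liner (`pass_rate(observations, "checkout")`) rather than a hunt through pages of notes, and `to_csv(observations)` gives you something you can paste into a spreadsheet.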

The data AI can’t see

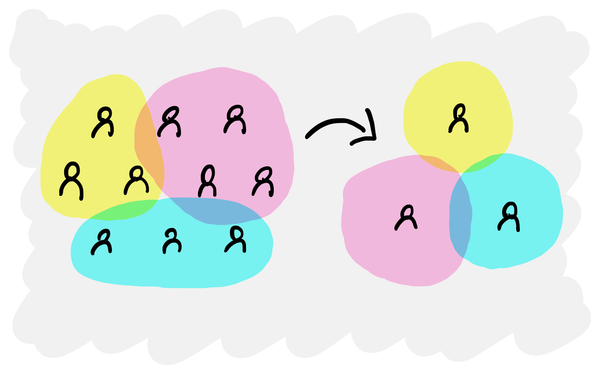

With the tools we now have, it’s tempting to dump every transcript into AI and ask it to do all the analysis for you. With clean human-edited transcripts and a million-token context window, an LLM can produce a decent set of themes from interviews faster than anyone could do by hand.

But you only get good results if the data going into the LLM is complete. Transcripts plus structured notes can give you a close-to-complete dataset. Transcripts on their own, for anything more behavioural than a formative chat, are only a partial one.
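As a rough illustration of "transcripts plus structured notes", the sketch below stitches the two together into a single block of text per participant before it goes anywhere near an LLM. The function name, the section labels and the note fields are all hypothetical, and no particular AI tool or API is assumed.

```python
import json


def build_analysis_input(participant, transcript, notes):
    """Combine what was said (transcript) with what was observed (notes).

    `notes` is a list of plain dicts; the keys are whatever fields you
    chose to capture during the session (assumed here, not prescribed).
    """
    sections = [
        f"## Participant {participant}",
        "### Transcript (what was said)",
        transcript.strip(),
        "### Structured notes (what the transcript can't show)",
        json.dumps(notes, indent=2),
    ]
    return "\n\n".join(sections)


# Made-up example data, purely for illustration.
example = build_analysis_input(
    "P2",
    "Moderator: How did that go?\nParticipant: Fine, I think.",
    [{"task": "checkout", "passed": False, "wrong_clicks": 3, "hesitated": True}],
)
```

In this made-up example the participant says the task went "fine", while the notes record a failed task and three wrong clicks. That gap is exactly what an analysis based on the transcript alone would miss.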

The further your research sits from pure speech, the more weight your notes carry. In usability testing, the notes are the data. In formative interviews, the transcript does more of the heavy lifting, but the notes still hold the things you only understood because you were running the interview.

So before your next study, don’t just default to your usual way of taking notes. Think about what data you’ll need at the other end, and design your notes around it. The analysis (human or AI) will be much better for it.